LLM Hallucinations: Why Models Invent Facts Confidently

Written by - Millan Kaul

Title: What is Hallucination in LLMs?

Why LLMs confidently invent facts, code, or references—and how testers catch them.

WHY?

For Developers and SDETs

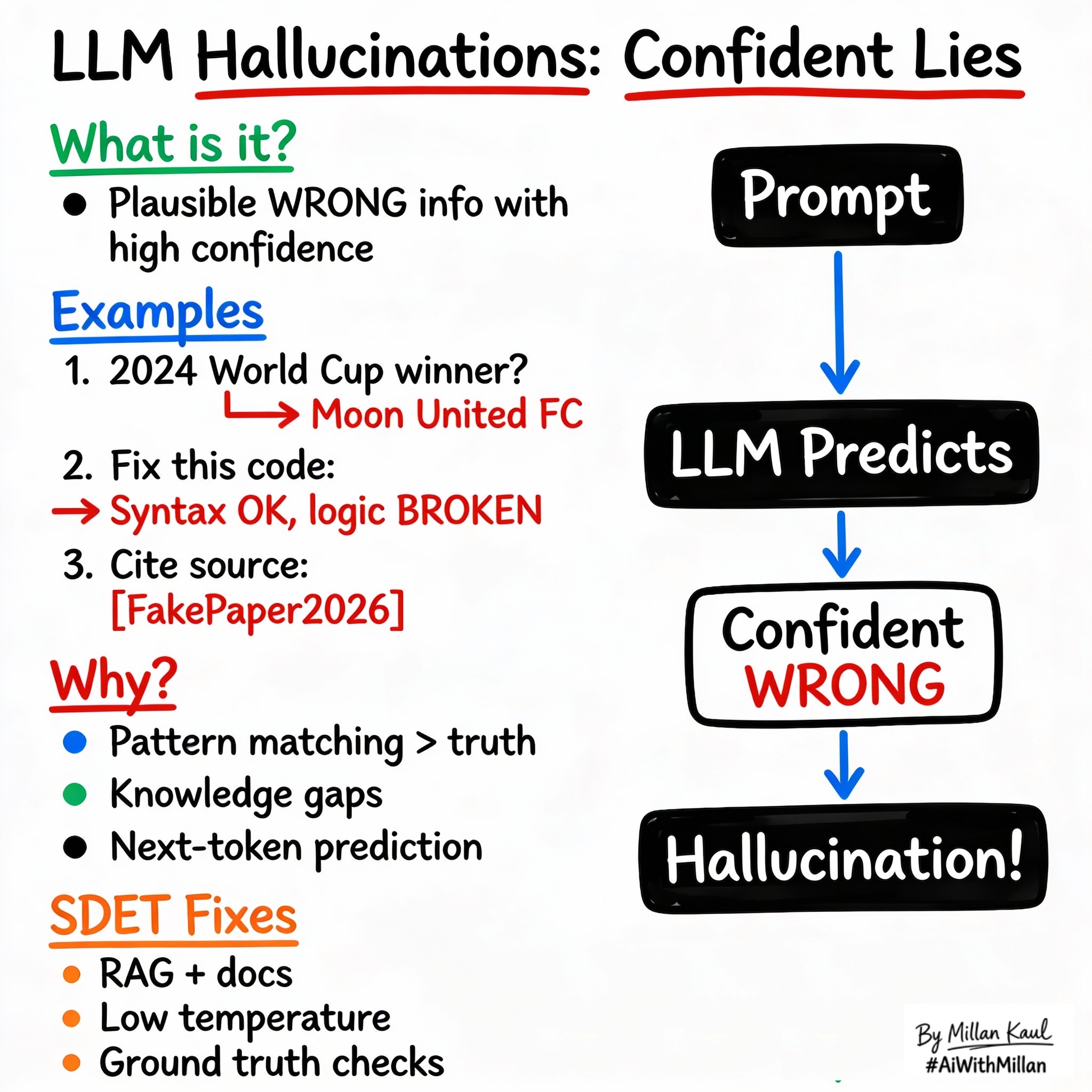

- Hallucination = model generates confident but wrong information (facts, code, citations), creating silent failures in test generation, summaries, or automation.

- Every LLM tester’s job #1: detect, measure, and mitigate hallucinations before they reach production.

From a Leadership view:

- Hallucinations are the #1 production risk for LLM apps—wrong advice, fake citations, or unsafe code can damage trust overnight.

- Proactive hallucination testing = competitive advantage in AI reliability.

WHAT?

- Hallucination occurs when LLMs produce plausible but factually incorrect outputs with high confidence, often due to pattern‑matching over memorized knowledge.

- Types: factual (“Moon landing in 1969? No, 1972”), contextual (misinterpreting prompt), fabricated (inventing sources/references).

- Root causes: statistical prediction (next likely token ≠ truth), knowledge gaps, overgeneralization.

Take these concrete examples:

- Prompt: “Who won 2024 World Cup?” → “Moon United FC” (pure invention).

- Code: “Write login function” → Syntax‑correct but logically broken auth.

- Citation: “Per [fake paper 2025]” (doesn’t exist).

From a Leadership view: Hallucinations look convincing—that’s the danger. Humans spot them; scale requires automated detection.

WHEN?

Hallucinations happen most

- Knowledge beyond cutoff (post‑training events).

- Ambiguous/edge prompts (rare scenarios).

- High temperature (randomness amplifies errors).

If you are a Leader consider: Open‑ended generation (summaries, Q&A) vs constrained output (JSON classification).

Low hallucination risk

- Highly constrained formats (JSON schemas).

- RAG with fresh docs.

- Low temperature + verification.

For leadership: Never trust unconstrained generation in customer‑facing systems.

WHERE?

Think about where hallucinations hide.

- Test generation: Invented edge cases that don’t apply.

- Log analysis: Fake root causes from pattern‑matching.

From a Leadership angle: Customer outputs—chatbots, reports, recommendations where wrong info = churn/liability.

Concrete examples:

- “2024 election winner?” → Confident wrong answer (pre‑training cutoff).

- “Fix this code” → Syntax‑OK but logically broken.

- “Cite sources” → “[Author2026] says…” (future paper).

HOW?

1. Conceptual steps to detect/mitigate

- Ground truth checks

- Compare output against known facts/docs (automated where possible).

- Confidence scoring

- Low probability tokens = higher hallucination risk.

- RAG integration

- Force model to cite real retrieved docs.

- Guardrails

- “If unsure, say ‘I don’t know’”; structured output.

2. Examples

- Detection: “Source?” → refuses/makes up → hallucination.

- Simple fix: “Use only provided context” + real docs.

- Eval metric: “Factual accuracy % on 100 known questions.”

3. Testing mindset

- Hallucination benchmark: 50‑100 questions with known answers.

- Temperature sweep: measure hallucination rate vs temp.

- From a Leadership view: Track “hallucination incident rate” like bug escape rate.

Reference

- Shelf.io – “LLM Hallucinations Guide” shelf.io

- IBM – “Managing LLM Hallucinations” ibm.com

- Prompting Guide – “Hallucination Mitigation” promptingguide.ai