Attention Mechanism: How LLMs Decide ‘What Matters Most’

Written by - Millan Kaul

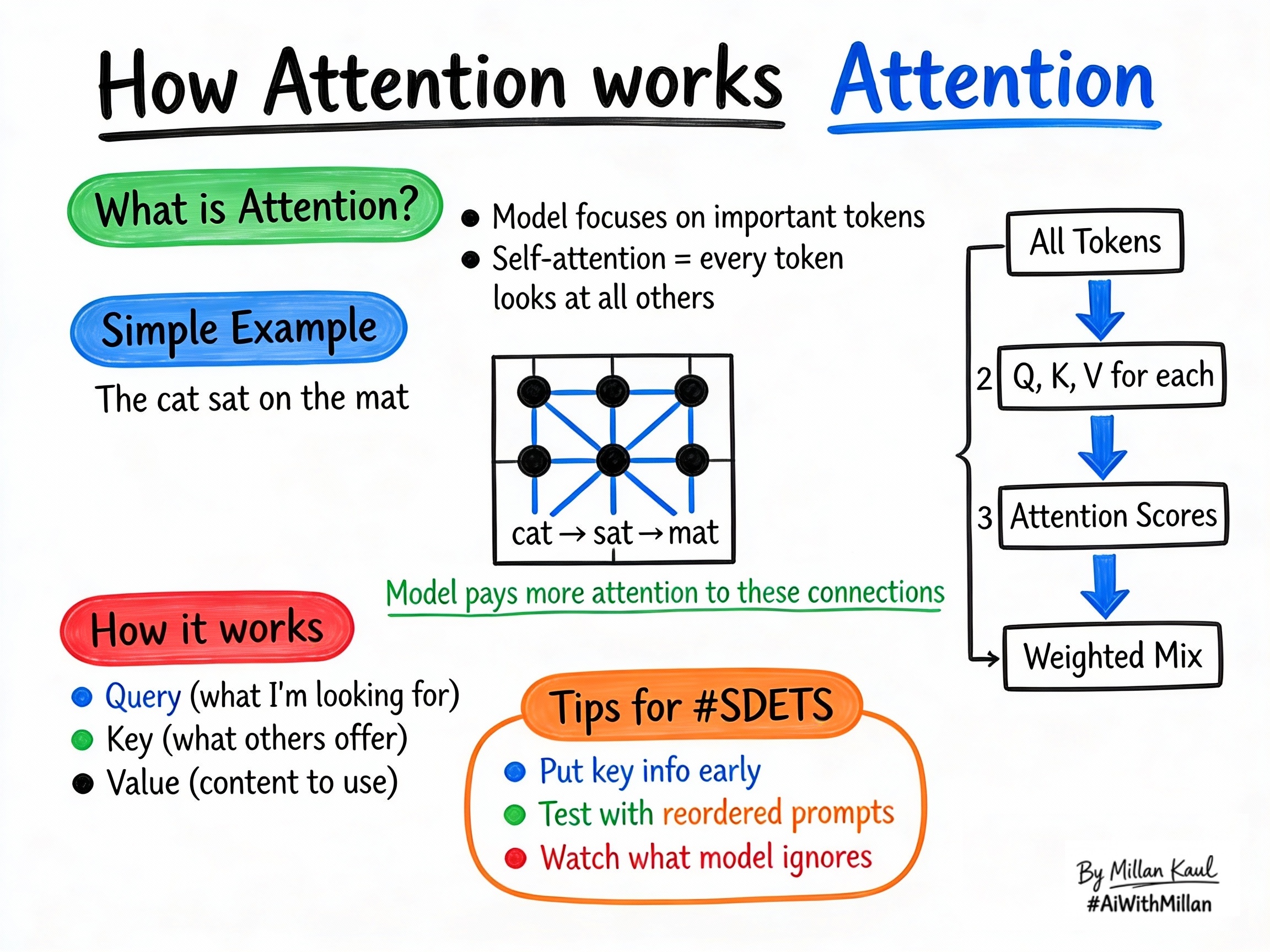

Attention explains how an LLM decides which words or tokens matter most when generating a response.

WHY?

- Prompt behavior: attention tells the model which parts of a prompt matter for each answer, so it is key to understanding why some instructions are followed and others are ignored.

- Test design: when testing long prompts or nested rules, attention affects whether the model treats the right words as important.

WHAT?

- An attention mechanism lets a model assign different weights to different tokens when producing each output token. Some tokens influence the next output more than others.

- Self-attention means every token looks at all the other tokens in the input, including itself, to decide which ones matter most for the current output.

- The model computes attention using three vectors per token: Query (Q), Key (K), and Value (V). Query-Key similarity gives attention scores, which weight the Values.

Concrete examples:

- In “The cat sat on the mat”, when predicting “sat”, the model pays more attention to “cat” than to “the.”

- In “She put the book on the table because it was heavy”, attention links “it” to “book,” not “table.”

Quick rule of thumb: attention tells the model where to focus inside the input.

WHEN AND WHERE?

When attention matters most

- When prompts include multiple instructions (“do X, but not Y, unless Z”), attention decides whether the model prioritizes the right constraint.

- In multi-turn conversations, attention controls how much past context influences the next response.

- When summarizing long documents, attention quality affects whether key details are preserved.

Where attention appears

- In transformer layers of every modern LLM. Each layer has multi-head self-attention, with different heads focusing on different patterns.

- In debug views such as attention heatmaps, which show which input tokens the model considered important.

- In system behavior: attention is what lets models track earlier parts of long prompts and preserve key context.

Practical examples:

- “The cat sat on the mat” — when predicting “sat,” attention links “cat” and “mat” more strongly than “the.”

- A long incident report where attention focuses more on root cause lines than boilerplate text.

- A safety prompt containing “NEVER reveal secrets” should keep that phrase highly weighted even if it appears later.

HOW?

Practical steps

- Each token becomes Q, K, V

- The model converts each token embedding into three vectors: query, key, and value.

- Compute attention scores

- Each token’s query is compared with every other token’s key; higher similarity means more attention.

- Turn scores into weights and mix values

- Scores go through softmax to become weights that sum to 1, then a weighted sum of values builds a context-aware representation.

- Repeat across heads and layers

- Multi-head attention lets different heads look for different patterns, and stacking layers captures deeper relationships.

Real examples

- “The cat sat” prediction: when predicting “sat,” Query(“sat”) finds high similarity with Key(“cat”) and Key(“mat”), so it pulls more “cat” and “mat” context into the representation.

- Pronoun resolution: “She dropped the glass because it broke” — attention links “it” strongly to “glass,” not “she.”

- Business rule testing: prompt “Follow policy X unless condition Y. Input Z.” depends on attention to weight “unless condition Y” heavily.

Testing mindset

- Test position sensitivity: does reordering critical instructions change behavior?

- Test constraint respect: does the model still follow safety rules buried at the end of long prompts?

- Use contrast tests: same content with different ordering or emphasis; unexpected flips indicate attention issues.

For Leaders

Why attention matters: it determines whether the model focuses on the right instructions and context, especially in long or complex prompts.

Where to watch: check whether constraints, safety rules, and business logic remain influential as prompt length grows.

Risk management: attention problems can make models ignore important clauses or context. Add “does the model focus on the right part of the prompt?” to your AI risk checklist.