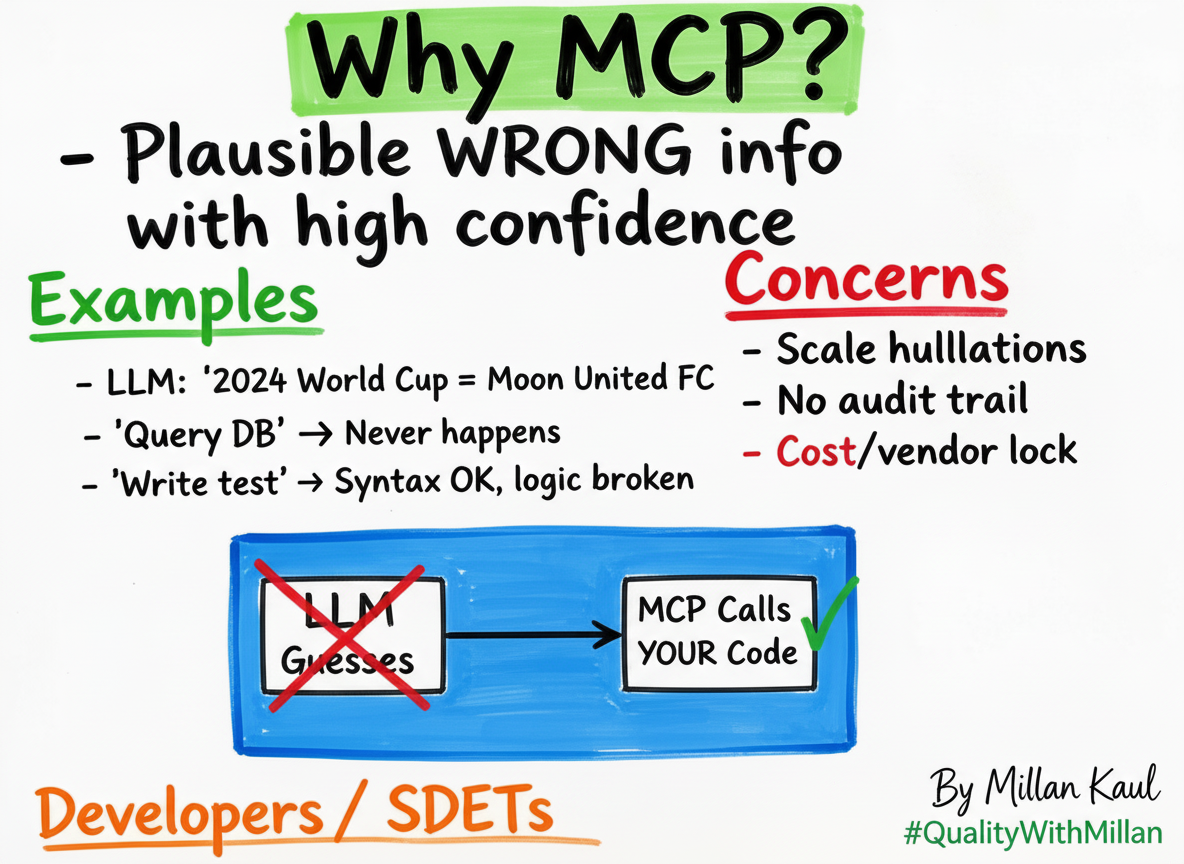

Why MCP?

“Last week my LLM swore the 2024 World Cup winner was ‘Moon United FC’.

It was confident, detailed, and 100% hallucinated.”

MCP won’t cure hallucinations on its own. It shrinks them by giving LLMs a standard way to call your code instead of guessing.

The Core Problem

For Developers/SDETs:

- LLM says “I’ll query your DB” → Never actually does it.

- “Write a test” → Syntax‑OK, logic‑broken.

- “Analyze these logs” → Makes up patterns that don’t exist.

Leadership view: “Scale needs determinism. Hallucinations scale too.”

What MCP Actually Solves

MCP = “USB‑C for AI tools”

Instead of the LLM guessing → the LLM calls YOUR Python/Node.js function:

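```python
# Python - FastMCP (assumes a SQLite file "bank.db" with a users(id, balance) table)
import sqlite3

from fastmcp import FastMCP

mcp = FastMCP("banking-server")

@mcp.tool()
def get_user_balance(user_id: str) -> float:
    """Return a user's balance straight from the database."""
    # Parameterized query: user_id is bound, never pasted into the SQL string.
    with sqlite3.connect("bank.db") as conn:
        row = conn.execute("SELECT balance FROM users WHERE id = ?", (user_id,)).fetchone()
    if row is None:
        raise ValueError(f"Unknown user_id: {user_id}")
    return float(row[0])

if __name__ == "__main__":
    mcp.run()  # serves over stdio by default
```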

Result: the balance comes from real data and your business logic, not from the model’s guess.
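Under the hood, the MCP specification has the client send your server a JSON-RPC 2.0 `tools/call` request (tool name plus arguments) when the model decides to use a tool, and it gets back a `result` holding `content` items and an `isError` flag. The toy dispatcher below is a minimal sketch of that round trip in plain Python, not FastMCP's actual implementation; `TOOLS`, `tool`, `reply`, `handle` and the hard-coded balance are hypothetical stand-ins.

```python
import json
from typing import Any, Callable

# Hypothetical in-process registry; real MCP SDKs (e.g. FastMCP) do this for you.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a plain Python function as a callable tool under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_user_balance(user_id: str) -> float:
    # Stand-in data for illustration; a real tool would query your database.
    return {"u-123": 250.75}[user_id]


def reply(req_id: Any, **body: Any) -> str:
    """Wrap a result or an error in a JSON-RPC 2.0 response envelope."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, **body})


def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 'tools/call' request with an MCP-style result."""
    req = json.loads(raw)
    if req.get("method") != "tools/call":
        return reply(req["id"], error={"code": -32601, "message": "Method not found"})
    params = req["params"]
    fn = TOOLS.get(params["name"])
    if fn is None:
        # Unknown tool names are protocol errors, not tool results.
        return reply(req["id"], error={"code": -32602, "message": f"Unknown tool: {params['name']}"})
    try:
        value = fn(**params.get("arguments", {}))
    except Exception as exc:
        # Failures inside the tool come back as a result flagged isError.
        return reply(req["id"], result={"content": [{"type": "text", "text": str(exc)}], "isError": True})
    return reply(req["id"], result={"content": [{"type": "text", "text": str(value)}], "isError": False})


request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "get_user_balance", "arguments": {"user_id": "u-123"}},
})
print(handle(request))
```

The printed response carries the text `"250.75"` in `result.content[0]`: the number came from a function, not from the model.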

Developer Wins

- No more “fake APIs” – LLM calls your actual code

- Testable – unit test your MCP tools like normal functions

- Local dev – VS Code + Ollama + local MCP servers can keep the whole loop offline

- Versioned – tools live in your codebase, so v1 vs v2 tools ship through normal versioning and review = controlled evolution
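A minimal sketch of the “Testable” point, assuming you keep the business logic in a plain function (the hypothetical `fetch_balance` below) and let the MCP tool be a thin wrapper around it. The test uses only the standard library: `unittest` plus an in-memory SQLite database with an assumed `users(id, balance)` schema.

```python
import sqlite3
import unittest


def fetch_balance(conn: sqlite3.Connection, user_id: str) -> float:
    """Plain business logic; an MCP tool can be a thin wrapper around this."""
    row = conn.execute("SELECT balance FROM users WHERE id = ?", (user_id,)).fetchone()
    if row is None:
        raise ValueError(f"Unknown user_id: {user_id}")
    return float(row[0])


class FetchBalanceTest(unittest.TestCase):
    def setUp(self) -> None:
        # In-memory database: fast, isolated, no real customer data touched.
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute("CREATE TABLE users (id TEXT PRIMARY KEY, balance REAL)")
        self.conn.execute("INSERT INTO users VALUES ('u-1', 99.5)")

    def tearDown(self) -> None:
        self.conn.close()

    def test_known_user(self) -> None:
        self.assertEqual(fetch_balance(self.conn, "u-1"), 99.5)

    def test_unknown_user(self) -> None:
        with self.assertRaises(ValueError):
            fetch_balance(self.conn, "nope")
```

Save it as, say, `test_balance.py` and run `python -m unittest test_balance`; no LLM or MCP client is needed to exercise the logic.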

Leadership Wins

- Audit trail – Every tool call passes through your server, so you can log it; no black box

- Cost control – Local models + local MCP servers = no per-token API bills

- Vendor neutral – Swap Claude ↔ Llama ↔ GPT without rewriting your tools

- Compliance – Your code controls PII/data access
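An audit trail is something your server code has to write. One way to sketch it, assuming Python tools that receive keyword arguments, is a decorator that emits one structured log line per call. `audited` and the `mcp.audit` logger name are hypothetical, and how you attach the decorator to a real MCP server depends on your SDK.

```python
import functools
import json
import logging
import time
from typing import Any, Callable

logging.basicConfig(format="%(asctime)s %(name)s %(message)s")
audit_log = logging.getLogger("mcp.audit")
audit_log.setLevel(logging.INFO)


def audited(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Emit one JSON audit record per tool call: name, arguments, status, duration."""
    @functools.wraps(fn)
    def wrapper(**kwargs: Any) -> Any:
        # MCP tool arguments arrive as a JSON object, so keyword-only calls suffice here.
        record: dict[str, Any] = {"tool": fn.__name__, "args": kwargs}
        start = time.perf_counter()
        try:
            result = fn(**kwargs)
            record["status"] = "ok"
            return result
        except Exception as exc:
            record["status"] = f"error: {exc!r}"
            raise
        finally:
            # Runs on success and failure alike, so no call goes unlogged.
            record["ms"] = round((time.perf_counter() - start) * 1000, 2)
            audit_log.info(json.dumps(record, default=str))
    return wrapper


@audited
def get_user_balance(user_id: str) -> float:
    # Stand-in data for illustration; a real tool would query your database.
    return {"u-123": 250.75}[user_id]


get_user_balance(user_id="u-123")
```

Because arguments are logged verbatim, redact PII fields before logging if the Compliance point applies to your tools.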

Concrete Example


WITHOUT MCP: “Get Q4 sales numbers” → LLM generates approximate/estimated figures

WITH MCP: “Get Q4 sales numbers” → LLM calls: get_sales("2025-Q4") → Returns: Exact database result
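A minimal sketch of what a `get_sales("2025-Q4")` tool might do behind the scenes, assuming a `sales(sold_on, amount)` table with ISO-format dates. The connection parameter, `quarter_bounds`, the schema and the sample rows are all hypothetical; an MCP tool wrapper would accept only the period string and supply the connection itself.

```python
import re
import sqlite3
from datetime import date


def quarter_bounds(period: str) -> tuple[str, str]:
    """Turn 'YYYY-Qn' into an inclusive start and exclusive end date (ISO strings)."""
    match = re.fullmatch(r"(\d{4})-Q([1-4])", period)
    if match is None:
        raise ValueError(f"Expected YYYY-Qn, got {period!r}")
    year, quarter = int(match.group(1)), int(match.group(2))
    start = date(year, 3 * (quarter - 1) + 1, 1)
    end = date(year + 1, 1, 1) if quarter == 4 else date(year, 3 * quarter + 1, 1)
    return start.isoformat(), end.isoformat()


def get_sales(conn: sqlite3.Connection, period: str) -> float:
    """Sum sales in the quarter; the figure comes from the database, not the model."""
    start, end = quarter_bounds(period)
    (total,) = conn.execute(
        "SELECT COALESCE(SUM(amount), 0) FROM sales WHERE sold_on >= ? AND sold_on < ?",
        (start, end),
    ).fetchone()
    return float(total)


# Demo with an in-memory table and made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (sold_on TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("2025-09-30", 500.0), ("2025-10-01", 1200.0), ("2025-12-31", 800.0), ("2026-01-01", 300.0)],
)
print(get_sales(conn, "2025-Q4"))
```

The half-open range (`>= start AND < end`) counts each day exactly once, so boundary sales on 2025-09-30 and 2026-01-01 stay out of Q4 and the demo prints `2000.0`.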

Call‑to‑Action

“Stop debugging LLM hallucinations. Start shipping MCP tools.”
