QA Toolbox: Powering Reliable AI Outputs

Written by - Millan Kaul

QA is not disappearing in the AI era. It is becoming the layer that makes AI outputs reliable, testable and safe.

Why QA still matters

- AI-native teams move fast, but speed without quality creates risk.

- Prompts can drift.

- Outputs can hallucinate.

- Guardrails may get skipped.

That is where QA and quality engineering matter more than ever.

In AI systems, QA is not just about finding bugs. It is about checking behavior, validating outputs and protecting users.

But the question is: will my old toolbox work?

Here is my attempt to answer exactly that.

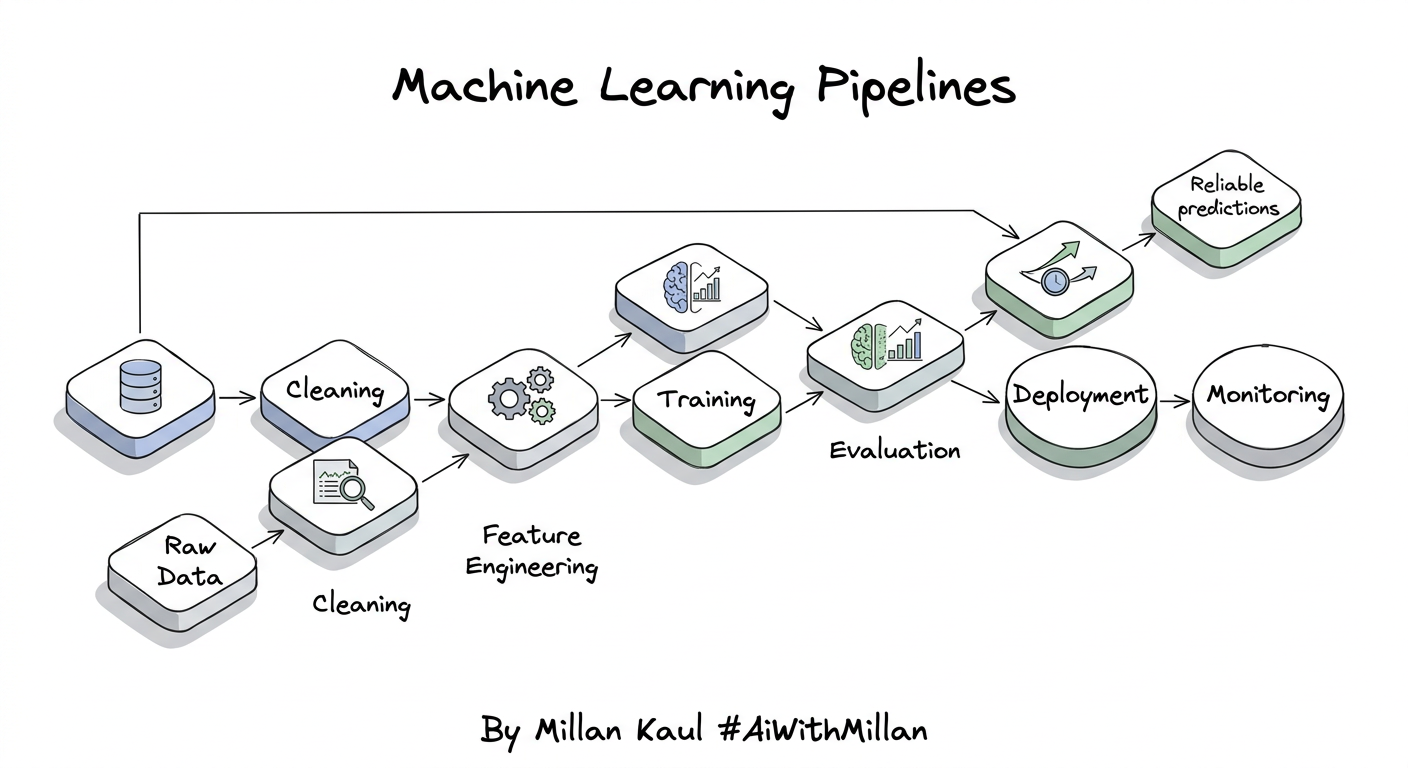

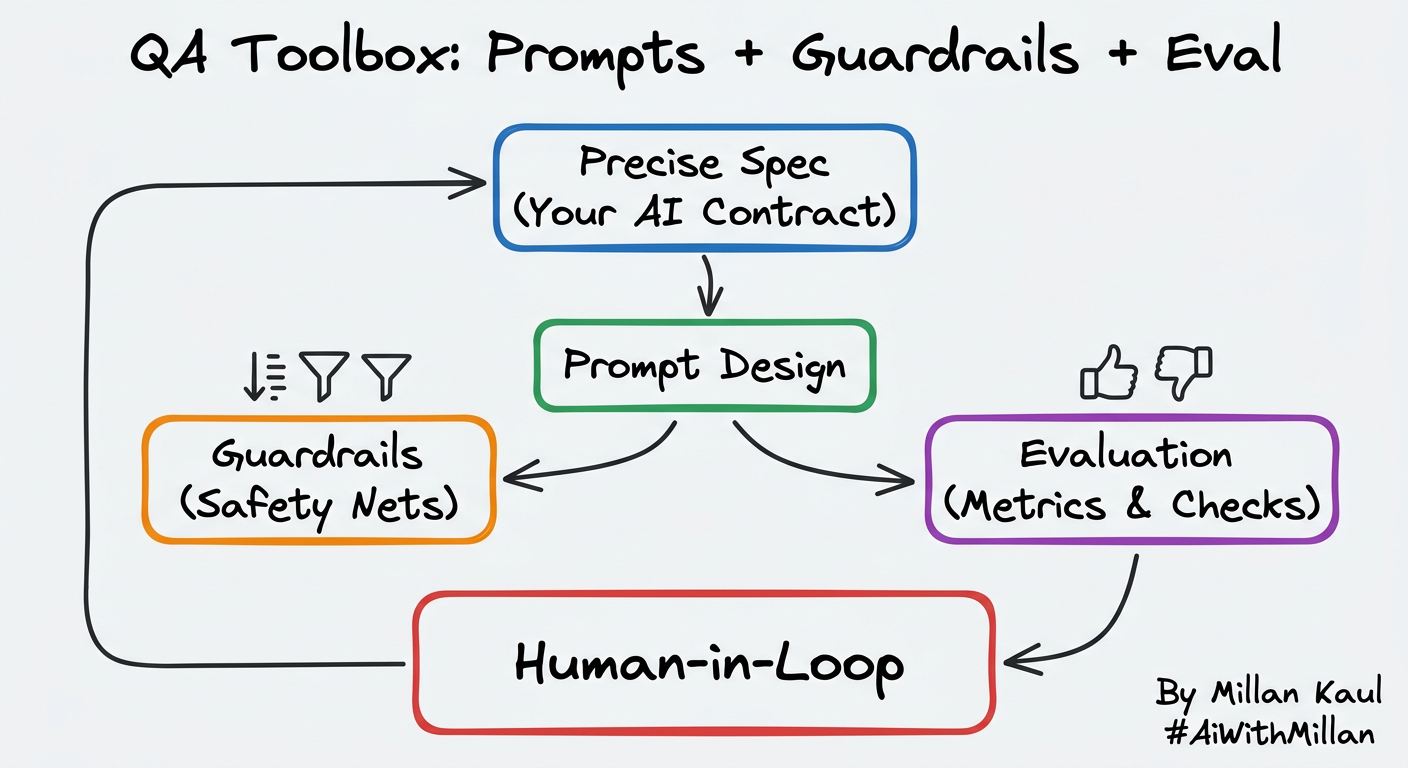

The QA toolbox for AI

Start thinking of QA in AI-native products as a simple toolbox:

- Prompts define the expected behavior.

- Guardrails prevent unsafe or off-spec outputs.

- Evaluation checks whether the model actually did the right thing.

- Human-in-the-loop review catches the edge cases.

This is the new QA stack for AI products.

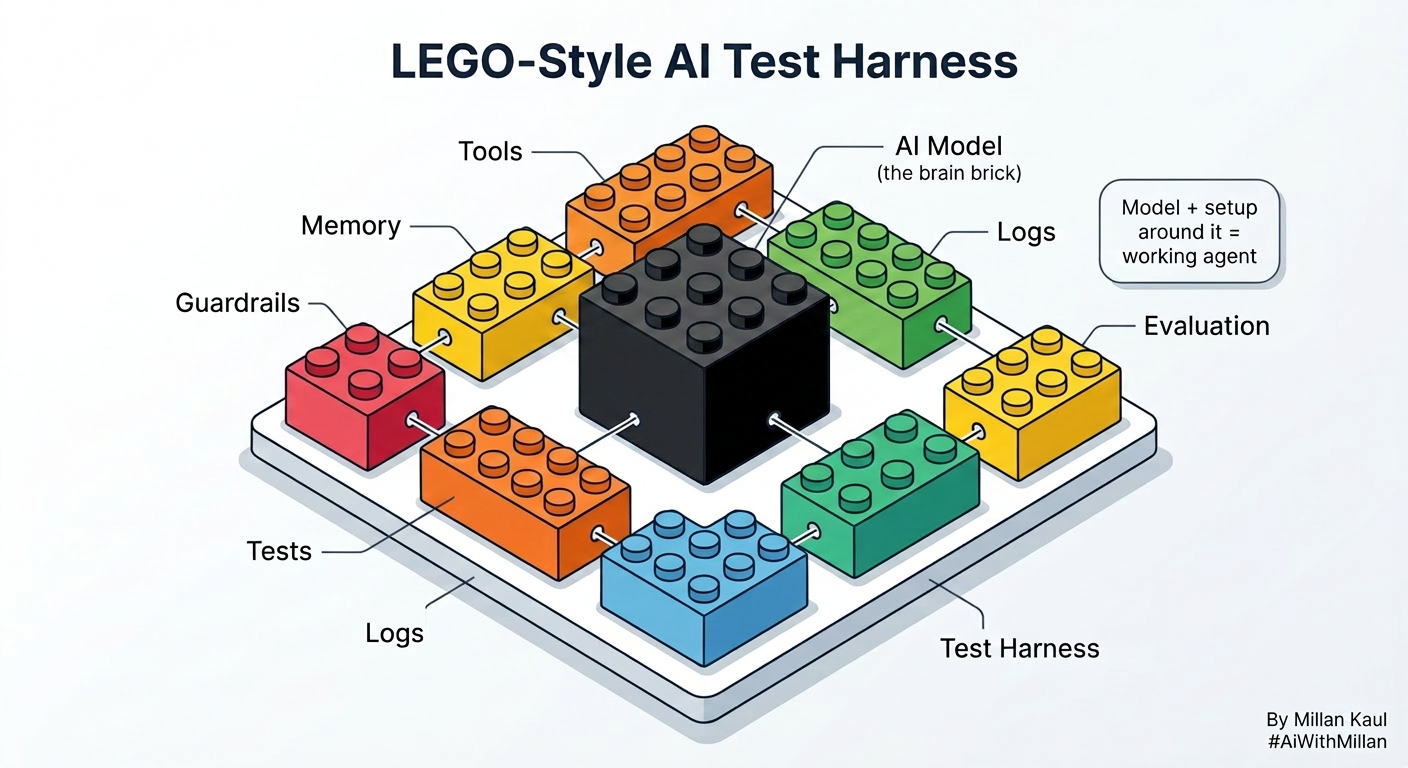

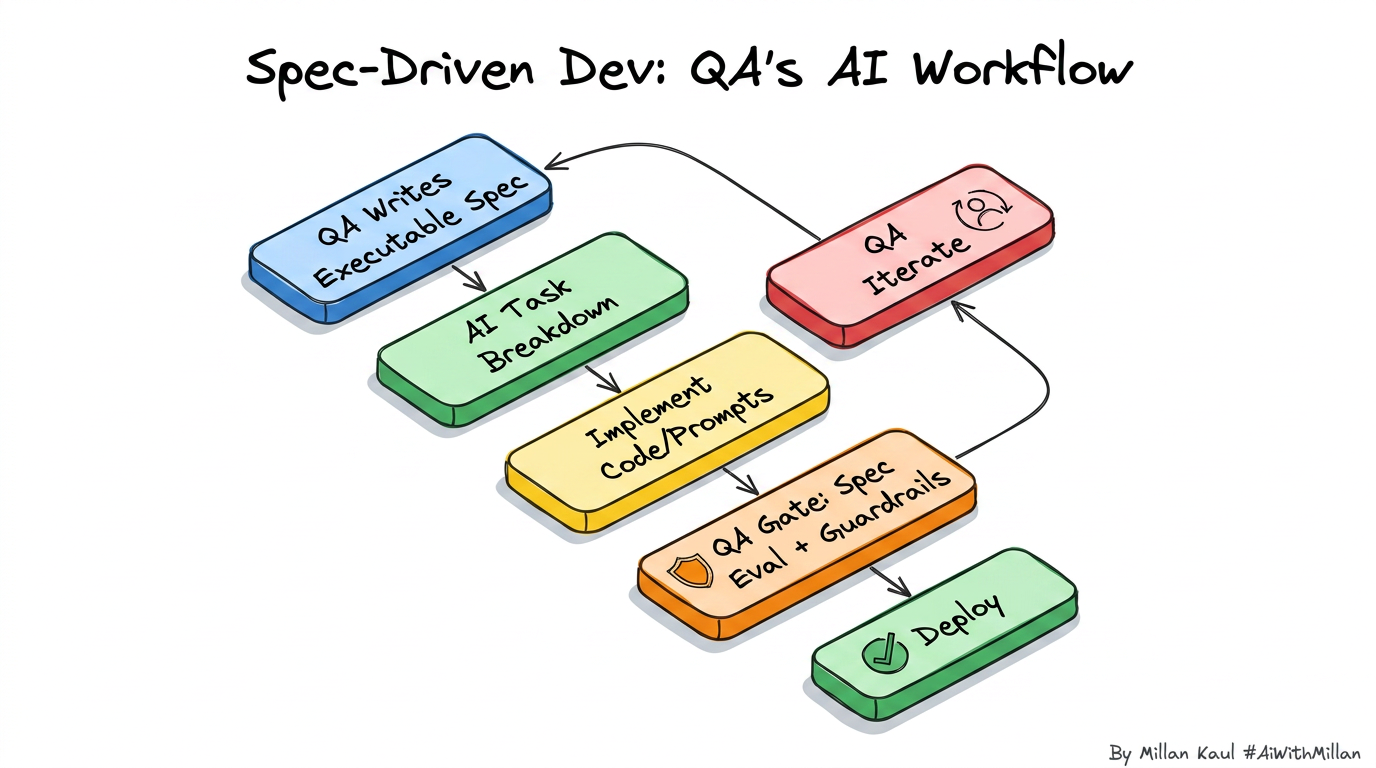

What about spec-driven development? What are my tools for that?

In spec-driven development, the spec becomes the source of truth.

QA should not treat specs as documentation only. They should be treated as testable contracts.

That means:

- Define what good output looks like,

- Define what must never happen, and

- Test against that every time.
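To make "spec as a testable contract" concrete, here is a minimal sketch in plain Python. Everything in it is an assumption for illustration: the `OutputSpec` class, the `check_against_spec` helper, the JSON shape with `answer` and `sources` keys, and the forbidden patterns are hypothetical and not part of any library. It only shows the idea of encoding "what good looks like" and "what must never happen" as data, then checking every output against it.

```python
import json
import re
from dataclasses import dataclass, field


@dataclass
class OutputSpec:
    """Hypothetical spec: what good output looks like and what must never happen."""
    required_keys: set                      # keys every output must contain
    forbidden_patterns: list = field(default_factory=list)  # regexes that must never match
    max_answer_chars: int = 500             # upper bound on the "answer" field


def check_against_spec(raw_output: str, spec: OutputSpec) -> list:
    """Return a list of violations; an empty list means the output meets the spec."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]

    violations = []
    missing = spec.required_keys - set(data)
    if missing:
        violations.append(f"missing keys: {sorted(missing)}")
    if len(str(data.get("answer", ""))) > spec.max_answer_chars:
        violations.append("answer exceeds length limit")
    for pattern in spec.forbidden_patterns:
        if re.search(pattern, raw_output, flags=re.IGNORECASE):
            violations.append(f"forbidden pattern matched: {pattern}")
    return violations


# Illustrative spec for a support assistant (assumed, not from a real product).
SUPPORT_SPEC = OutputSpec(
    required_keys={"answer", "sources"},
    forbidden_patterns=[r"guaranteed refund", r"[\w.+-]+@[\w-]+\.[\w.]+"],
)

good = '{"answer": "Reset your password from Settings.", "sources": ["kb-12"]}'
bad = '{"answer": "You get a guaranteed refund, mail bob@example.com"}'

print(check_against_spec(good, SUPPORT_SPEC))
print(check_against_spec(bad, SUPPORT_SPEC))
```

Because the spec is plain data, the same check can run in CI on every prompt or model change, which is what "test against that every time" asks for.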

Sample code: PII guardrails with NeMo Guardrails

NeMo Guardrails supports PII detection and masking in input and output flows through its Private AI integration.

config.yml

    models:
      - type: main
        engine: openai
        model: gpt-4o-mini
    rails:
      input:
        flows:
          - detect pii on input
      output:
        flows:
          - detect pii on output
      config:
        privateai:
          server_endpoint: http://your-privateai-api-endpoint/process/text
          input:
            entities:
              - NAME_FAMILY
              - LOCATION_ADDRESS_STREET
              - EMAIL_ADDRESS
          output:
            entities:
              - NAME_FAMILY
              - LOCATION_ADDRESS_STREET
              - EMAIL_ADDRESS

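Python

    from nemoguardrails import RailsConfig, LLMRails

    # Load config.yml from the ./config directory
    config = RailsConfig.from_path("./config")
    rails = LLMRails(config)

    # The message contains a family name and an email address,
    # both of which are listed under the input entities above
    messages = [
        {"role": "user", "content": "Hi, I am John Doe. My email is john.doe@example.com"}
    ]

    response = rails.generate(messages=messages)
    print(response)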

Private AI can detect or mask PII in input, output, and retrieval flows. The docs note that a detection flow blocks the text from passing through if PII is found.
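The difference between detecting and masking is easy to blur, so here is a small stand-alone illustration. It does not call NeMo Guardrails or Private AI. The `detect_rail` and `mask_rail` functions and the email-only regex are hypothetical stand-ins, assumed purely to show the two behaviors: detection blocks the whole text, masking lets it through with the PII replaced.

```python
import re
from typing import Optional

# Simplified stand-in for a PII detector: matches email addresses only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")


def detect_rail(text: str) -> Optional[str]:
    """Detection mode: block the whole text (return None) if any PII is found."""
    return None if EMAIL.search(text) else text


def mask_rail(text: str) -> str:
    """Masking mode: let the text through with PII replaced by a placeholder."""
    return EMAIL.sub("[EMAIL_ADDRESS]", text)


message = "Hi, I am John Doe. My email is john.doe@example.com"

print(detect_rail(message))  # blocked, so nothing reaches the model
print(mask_rail(message))    # passes through with the email masked
```

For QA, the two modes need different assertions: with detection you test that the request is refused, with masking you test that the placeholder appears and the original value does not.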

What to test

For AI products, QA should always check:

- Does the prompt produce the expected structure?

- Does the model stay within policy?

- Does the output leak PII?

- Does the answer remain useful under edge cases?

- Does human review catch what automation misses?

Those are a few of the ways QA can stay relevant in AI-native teams.
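The checklist above can be turned into a small regression suite. The sketch below is an assumption-heavy illustration: `fake_model` stands in for a real model call, the checks and edge cases are invented, and `run_suite` is a hypothetical helper. It runs each case through the model, records which checks fail, and builds a human review queue from all failures plus a sample of passing cases, since automation can miss things.

```python
import random


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    if not prompt.strip():
        return ""
    return f"Answer to: {prompt[:40]}"


def run_suite(model, cases, checks, review_sample=1, seed=0):
    """Run every case, collect failed check names, and build a human review queue."""
    failures, passed = [], []
    for case in cases:
        output = model(case)
        failed = [name for name, check in checks.items() if not check(output)]
        if failed:
            failures.append((case, failed))
        else:
            passed.append(case)
    # Humans review every failure plus a random sample of passing cases.
    rng = random.Random(seed)
    sampled = rng.sample(passed, min(review_sample, len(passed)))
    review_queue = [case for case, _ in failures] + sampled
    return failures, review_queue


# Illustrative checks: each takes the model output and returns True when it passes.
CHECKS = {
    "non_empty": lambda out: bool(out.strip()),
    "within_length": lambda out: len(out) <= 200,
    "no_email": lambda out: "@" not in out,
}

# A normal case plus edge cases: empty input, very long input, input carrying PII.
CASES = ["How do I reset my password?", "", "x" * 5000, "Email me at a@b.co"]

failures, review_queue = run_suite(fake_model, CASES, CHECKS)
for case, failed in failures:
    print(repr(case[:30]), "failed:", failed)
print("items for human review:", len(review_queue))
```

Swapping `fake_model` for a real client and growing `CASES` over time gives a suite that can run on every prompt or guardrail change.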

Closing thought

AI does not replace QA. It makes QA more important. [May 2026]

My personal take: The teams that win will be the ones that test prompts, guardrails, and evaluation as seriously as code.